Cost Functions for NN?

Fortunately, the cost functions for neural networks are more or less the same as those for other parametric models, such as linear models. In most cases, our parametric model defines a distribution $p(y \mid x; \theta)$ and we simply use the principle of maximum likelihood. This means we use the cross-entropy between the training data and the model's predictions as the cost function. Sometimes, we take a simpler approach: rather than predicting a complete probability distribution over $y$, we merely predict some statistic of $y$ conditioned on $x$. Specialized loss functions allow us to train a predictor of these estimates. The total cost function used to train a neural network will often combine one of the primary cost functions described here with a regularization term.

The specific form of the cost function changes from model to model, depending on the specific form of $\log p_{\text{model}}$. Expanding the cross-entropy cost typically yields some terms that do not depend on the model parameters and may be discarded.

Some points on logistic regression (LR) versus SVMs:

* LR gives us an unconstrained, smooth objective.
* LR can be used straightforwardly within Bayesian models.
* SVMs don't penalize examples for which the correct decision is made with sufficient confidence.
* SVMs have a nice dual form, giving sparse solutions when using the kernel trick (better scalability).
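As a minimal sketch of the points above, the snippet below compares the per-example logistic loss (the negative log-likelihood, i.e. cross-entropy, of a logistic model with labels in {-1, +1}) with the SVM hinge loss, both written as functions of the margin `y * f(x)`. The function names and the L2 penalty weight `lam` are illustrative assumptions, not something the text prescribes.

```python
import math


def logistic_loss(margin):
    """Negative log-likelihood of a logistic model for one example.

    margin = y * f(x) with y in {-1, +1}; the loss is log(1 + exp(-margin)).
    """
    # Numerically stable evaluation of log(1 + exp(-margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def hinge_loss(margin):
    """SVM hinge loss: exactly zero once the margin is at least 1."""
    return max(0.0, 1.0 - margin)


def regularized_cost(margins, weights, lam, loss):
    """Mean per-example loss plus an L2 regularization term.

    lam is a hypothetical regularization strength chosen for illustration.
    """
    data_term = sum(loss(m) for m in margins) / len(margins)
    penalty = 0.5 * lam * sum(w * w for w in weights)
    return data_term + penalty


if __name__ == "__main__":
    for m in (-1.0, 0.0, 1.0, 3.0):
        print(f"margin={m:+.1f}  logistic={logistic_loss(m):.4f}  "
              f"hinge={hinge_loss(m):.4f}")
```

The logistic loss is smooth and never exactly zero, while the hinge loss vanishes as soon as the margin reaches 1, which is what "sufficient confidence" amounts to in this sketch.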

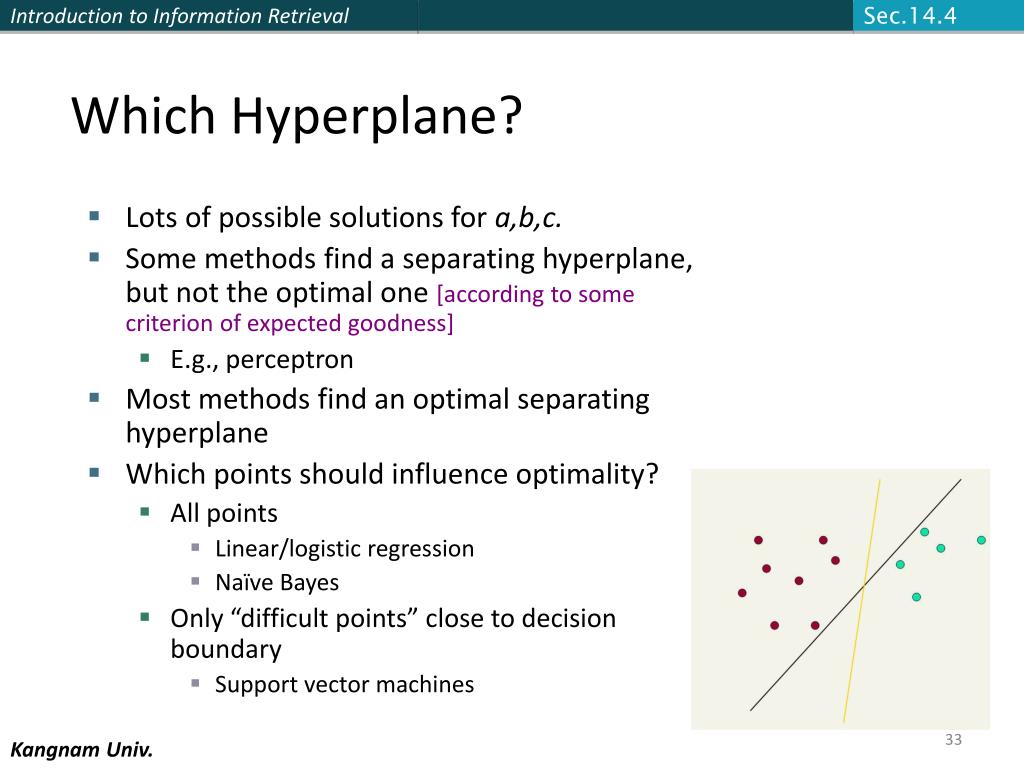

Logistic regression vs. SVMs: When to use which one?

Unlike logistic regression, the support vector machine does not provide probabilities, but only outputs a class identity.

* LR gives calibrated probabilities that can be interpreted as confidence in a decision.

The kernel trick consists of observing that many machine learning algorithms can be written exclusively in terms of dot products between examples. For example, it can be shown that the linear function used by the support vector machine can be rewritten as $w^\top x + b = b + \sum_{i=1}^{m} \alpha_i x^\top x^{(i)}$, where $x^{(i)}$ is a training example and $\alpha$ is a vector of coefficients. Rewriting the learning algorithm this way allows us to replace $x$ by the output of a given feature function $\phi(x)$ and the dot product with a function $k(x, x^{(i)}) = \phi(x) \cdot \phi(x^{(i)})$, called a kernel.

The kernel trick is powerful for two reasons. First, it allows us to learn models that are nonlinear as a function of $x$ using convex optimization techniques that are guaranteed to converge efficiently. This is possible because we consider $\phi$ fixed and optimize only $\alpha$, i.e., the optimization algorithm can view the decision function as being linear in a different space. Second, the kernel function $k$ often admits an implementation that is significantly more computationally efficient than naively constructing two $\phi(x)$ vectors and explicitly taking their dot product.

Can any similarity function be used for SVM?

Yes: deciding whether two examples are similar can be treated as a new classification problem. The input is simply twice as large as before (the two feature vectors concatenated together), and the class labels are "same" and "not same".
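To make the kernel trick concrete, here is a small sketch using the degree-2 polynomial kernel on 2-D inputs. It checks that $k(x, z) = (x \cdot z)^2$ gives the same value as the dot product of explicit feature vectors, and evaluates a decision function of the form $b + \sum_i \alpha_i k(x, x^{(i)})$. The choice of kernel, the helper names, and the toy coefficients are assumptions made for illustration.

```python
import math


def dot(u, v):
    """Plain dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def phi(x):
    """Explicit feature map matching the degree-2 polynomial kernel in 2-D."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2.0) * x1 * x2, x2 * x2)


def poly_kernel(x, z):
    """k(x, z) = (x . z)^2, computed without building phi(x) or phi(z)."""
    return dot(x, z) ** 2


def decision_function(x, train_x, alpha, b, kernel):
    """f(x) = b + sum_i alpha_i * k(x, x_i)."""
    return b + sum(a * kernel(x, xi) for a, xi in zip(alpha, train_x))


if __name__ == "__main__":
    x, z = (1.0, 2.0), (3.0, -1.0)
    # Both routes should agree up to floating-point rounding.
    print(poly_kernel(x, z), dot(phi(x), phi(z)))

    # Toy training points and coefficients, chosen only for the demo.
    train_x = [(1.0, 0.0), (0.0, 1.0)]
    alpha = [0.5, -0.5]
    print(decision_function((2.0, 1.0), train_x, alpha, 0.1, poly_kernel))
```

The kernel needs one dot product in the original 2-D space, whereas the explicit route builds two 3-D vectors first; the gap grows quickly with input dimension and polynomial degree.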

One key innovation associated with support vector machines is the kernel trick. A separate practical concern is outliers, which can badly degrade the model: the margin shrinks and the decision boundary becomes suboptimal, resulting in poor classification. In the presence of outliers you need a more general version of the support vector machine, one with soft margins. So instead of minimizing $\frac{1}{2}\|w\|^2$, we minimize the standard soft-margin objective $\frac{1}{2}\|w\|^2 + C \sum_i \xi_i$, where the slack variables $\xi_i \ge 0$ measure how much each point violates the hard constraint and $C$ controls how much slack is allowed. This approach gives a linear penalty to mistakes in classification. There can be three cases for a point $x^{(i)}$:

1. It lies beyond the margin (in its area of classification) and doesn't contribute to the loss.
2. It lies on the margin, in which case it is a support vector.
3. It lies inside the margin, and a penalty is added (linear, i.e. proportional to the amount by which the data point violates the hard constraint).

If we increase $C$, we penalize the errors more. $C$ equal to infinity is the hard-margin case. The parameter $C$ therefore decides how you want to handle outliers. I would suggest using cross-validation to choose it.
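The soft-margin idea can be sketched in a few lines. The code below evaluates the usual soft-margin objective, with the slack of each point written as a hinge term, and labels a point according to the three cases described above. The function names, the tolerance `tol`, and the toy data are assumptions made for illustration; this evaluates the objective for a given `w` and `b` and does not train an SVM.

```python
def dot(u, v):
    """Plain dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def soft_margin_objective(w, b, X, y, C):
    """0.5 * ||w||^2 + C * sum_i max(0, 1 - y_i * (w . x_i + b))."""
    slack = [max(0.0, 1.0 - yi * (dot(w, xi) + b)) for xi, yi in zip(X, y)]
    return 0.5 * dot(w, w) + C * sum(slack)


def margin_case(w, b, x, y, tol=1e-9):
    """Return which of the three cases a labelled point falls into."""
    m = y * (dot(w, x) + b)
    if m > 1.0 + tol:
        return "beyond margin"   # no contribution to the loss
    if abs(m - 1.0) <= tol:
        return "on margin"       # a support vector
    return "inside margin"       # penalized linearly (includes misclassified points)


if __name__ == "__main__":
    w, b = (1.0, 0.0), 0.0
    X = [(2.0, 0.0), (1.0, 1.0), (0.5, 0.0), (-3.0, 0.0)]
    y = [1, 1, 1, -1]
    for xi, yi in zip(X, y):
        print(xi, yi, margin_case(w, b, xi, yi))
    # The same violation costs more as C grows.
    for C in (0.1, 1.0, 10.0):
        print(C, soft_margin_objective(w, b, X, y, C))
```

Only the third point violates the margin here, so raising `C` scales up its penalty while the $\frac{1}{2}\|w\|^2$ term stays fixed.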
